Last week I helped a JasperFx Software client with a use case where they get a steady stream of related events from an upstream system into a downstream system where order of processing is important, but the messages might arrive out of order.

Once again referring to the venerable Enterprise Integration Patterns book, that scenario requires a Resequencer:

How can we get a stream of related but out-of-sequence messages back into the correct order?

To solve the message ordering challenge, we introduced the new Resequencer Saga feature into Wolverine, and combined that with the existing “Partitioned Sequential Messaging” feature.

For the new built-in re-sequencing, we do need you to implement this interface on any message types in that related stream so that Wolverine “knows” where each message falls in the order of that stream:

    public interface SequencedMessage
    {
        int? Order { get; }
    }

The next step is to use a special new kind of Wolverine Saga called ResequencerSaga<T>, where the T is just some sort of common type for all the message types that are part of this ordered stream, and one that also implements the SequencedMessage interface shown above. Here’s a simple example I used for the testing:

    public record StartMyWorkflow(Guid Id);

    public record MySequencedCommand(Guid SagaId, int? Order) : SequencedMessage;

    public class MyWorkflowSaga : ResequencerSaga<MySequencedCommand>
    {
        public Guid Id { get; set; }

        public static MyWorkflowSaga Start(StartMyWorkflow cmd)
        {
            return new MyWorkflowSaga { Id = cmd.Id };
        }

        public void Handle(MySequencedCommand cmd)
        {
            // This will only be called when messages arrive in the correct order,
            // or when out-of-order messages are replayed after gaps are filled
        }
    }

At runtime, when Wolverine gets a message that is handled by that MyWorkflowSaga, there is some middleware that first compares the declared order of that message against the recorded state of the saga so far. In more concrete terms, if…

- It’s the first message in the sequence, Wolverine just processes it as normal and records in the saga state what the last processed message order was so that it “knows” what message sequence should be next

- It’s a later message in the sequence compared to the last message sequence processed, the saga state will just store the current message, persist the saga state, and otherwise skip the normal message processing

- The message is the next in the sequence according to what the saga state says should be processed next, it processes normally. If there are any previously out of order messages that the saga state already knows about that are sequentially next after the current message, Wolverine will re-publish those messages locally — but with the normal Wolverine message sequencing these cascading messages will not go anywhere until the initiating message completes

With this mechanism, Wolverine is able to put the messages arriving from the outside world back into the correct sequential order in its own processing.
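To make those three rules concrete, here is a minimal, stand-alone sketch of the same bookkeeping in plain C#. It is not Wolverine's ResequencerSaga<T> implementation: the ResequencerSketch<T> class, its Accept method, and the LastHandled property are hypothetical names for illustration. It also assumes that sequences start at 1 and that stale or duplicate orders are simply ignored, neither of which is stated above. Where Wolverine re-publishes the buffered messages locally, this sketch just returns them in order.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical model of the resequencing rules, NOT Wolverine's own code.
public sealed class ResequencerSketch<T>
{
    // Messages that arrived ahead of their turn, keyed by their declared order
    private readonly Dictionary<int, T> _pending = new();

    // Order of the last message that was released for handling.
    // Assumption: sequences start at 1, so 0 means "nothing handled yet".
    public int LastHandled { get; private set; }

    public int PendingCount => _pending.Count;

    // Returns the messages that are now safe to handle, in sequence order.
    public IReadOnlyList<T> Accept(int order, T message)
    {
        var ready = new List<T>();

        // Assumption: anything at or before the last handled order is ignored
        if (order <= LastHandled) return ready;

        // Later than expected: store it and skip normal processing for now
        if (order > LastHandled + 1)
        {
            _pending[order] = message;
            return ready;
        }

        // Exactly the next message: release it...
        ready.Add(message);
        LastHandled = order;

        // ...then release any stored messages that are now contiguous
        while (_pending.Remove(LastHandled + 1, out var next))
        {
            ready.Add(next);
            LastHandled++;
        }

        return ready;
    }
}
```

Feeding this sketch orders 2, 3, and then 1 releases nothing for the first two calls and all three messages, in order, on the third.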

Of course, this processing is very stateful and somewhat likely to be vulnerable to concurrent access problems. Most of the saga storage mechanisms in Wolverine happily support optimistic concurrency around saving saga state, so you could just use some selective retries on concurrency violations. Or better yet, Wolverine users can just about completely sidestep issues with concurrency by utilizing our newest improvement to partitioned messaging that we’re calling “Global Partitioning.”

Let’s say that you have a great deal of operations in your system that have to modify a resource of some sort like an entity, a file, a saga in this case, or an event stream that might be a little bit sensitive to concurrent access. Let’s also say that you have a mix of messages that impact these sensitive resources that come from both external, upstream systems and from cascaded messages within your own system.

The syntax for this next feature was added just today in Wolverine 5.21 as I realized the previous syntax was basically unusable in the course of trying to write this blog post. So it goes.

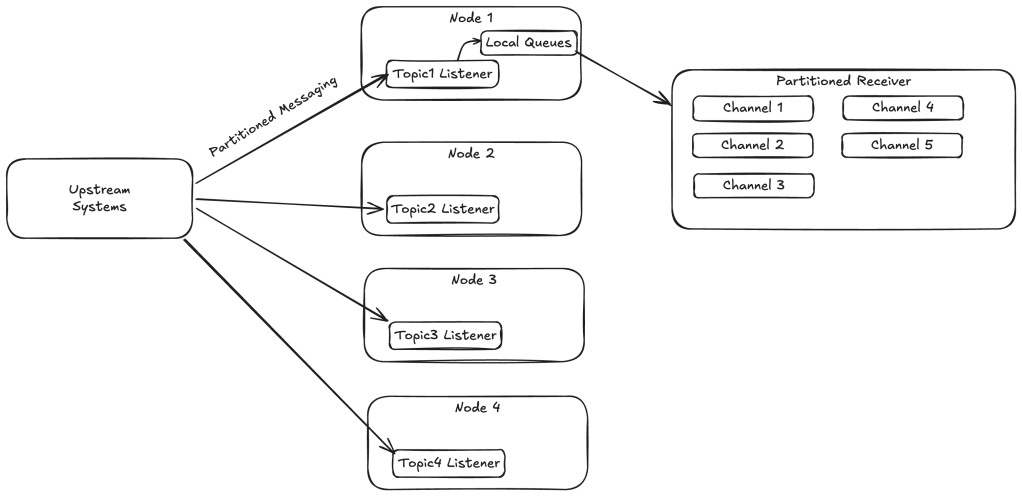

A “global partitioning” allows you to create a guarantee that messages impacting those resources can be processed sequentially within a message group while allowing for parallel processing between message groups throughout the entire cluster.

Imagine it like this (but know I drew this diagram for someone using Kafka even though the next example is using Rabbit MQ queues):

And with this configuration:

    using var host = await Host.CreateDefaultBuilder()
        .UseWolverine(opts =>
        {
            // You'd *also* supply credentials here of course!
            opts.UseRabbitMq();

            // Do something to add Saga storage too!

            opts
                .MessagePartitioning

                // This tells Wolverine to "just" use implied
                // message grouping based on Saga identity among other things
                .UseInferredMessageGrouping()

                .GlobalPartitioned(topology =>
                {
                    // Creates 5 sharded RabbitMQ queues named "sequenced1" through "sequenced5"
                    // with matching companion local queues for sequential processing
                    topology.UseShardedRabbitQueues("sequenced", 5);
                    topology.MessagesImplementing<MySequencedCommand>();
                });
        }).StartAsync();

What this does is spread the work of handling MySequencedCommand messages across five different RabbitMQ + local queue pairs, with each pair active on only a single node within your application. Even inside each local queue in this partitioning scheme, Wolverine is parallelizing between message groups.

Now, let’s talk about receiving any message that can be cast to MySequencedCommand. If the message is received at a completely different listener than the “sequenced1/2/3/4/5” queues defined above, such as one fed by an external system that knows absolutely nothing about your message partitioning, Wolverine immediately determines the message group identity by inferring it from the saga message handler rules (that’s what the UseInferredMessageGrouping() option does for us), then forwards that message to the node that is currently handling that group id. If the current node happens to be assigned that message group id, Wolverine forwards the message directly to the right local queue.

Likewise, if you publish a cascading message inside one of your handlers, Wolverine will determine the message group id for that message type, then either route that message locally if that group happens to be assigned to the current node (and it probably would be if you were cascading from your own handlers) or send it remotely to the right messaging endpoint (a RabbitMQ queue, a Kafka topic, or maybe an AWS SQS queue).

The point being, this guarantees that related messages are processed sequentially across the entire application cluster while allowing parallel processing between unrelated messages.
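To picture why related messages always land in the same place, here is a rough sketch of one way a group id could be mapped to one of the five sharded queues. This is an assumption for illustration only: the post does not describe how Wolverine actually assigns message groups to shards, and ShardRoutingSketch, StableHash, and QueueFor are hypothetical names. The sketch uses an FNV-1a hash rather than string.GetHashCode(), because the latter is randomized per process in modern .NET and so could not agree across nodes.

```csharp
using System;
using System.Text;

// Hypothetical illustration of stable group-to-shard routing,
// NOT Wolverine's actual assignment logic.
public static class ShardRoutingSketch
{
    // 32-bit FNV-1a: gives the same value for the same text on every node
    public static uint StableHash(string groupId)
    {
        const uint offsetBasis = 2166136261;
        const uint prime = 16777619;

        uint hash = offsetBasis;
        unchecked
        {
            foreach (byte b in Encoding.UTF8.GetBytes(groupId))
            {
                hash ^= b;
                hash *= prime; // wraps around on overflow by design
            }
        }

        return hash;
    }

    // Maps a group id to a queue name such as "sequenced1" through "sequenced5"
    public static string QueueFor(string groupId, string baseName, int shardCount)
    {
        if (shardCount <= 0) throw new ArgumentOutOfRangeException(nameof(shardCount));

        // Slots are numbered from 1 to match the queue names in the configuration above
        int slot = (int)(StableHash(groupId) % (uint)shardCount) + 1;
        return $"{baseName}{slot}";
    }
}
```

With a scheme like this, every message carrying the same saga identity resolves to the same queue name no matter which node computes it, while different identities spread across the five queues.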

Summary

These are hopefully two powerful new features that will benefit Wolverine users in the near future. Both of these features were built at the behest of JasperFx Software clients to directly support their current work. I’m very happy to just quietly fold in reasonably sized new features for JasperFx support clients without extra cost when those features likely benefit the community as a whole. Contact us at sales@jasperfx.net to find out what we can do to help your software development efforts be more successful.

And just for bragging rights tonight, I did some poking around (okay, I asked Claude to do it for me) to see if any other asynchronous messaging tools offer anything similar to what our global partitioning option does for Wolverine users. While you can certainly achieve the same goals through actor frameworks like AkkaDotNet or Orleans (I consider actor frameworks to be such a different paradigm that I don’t really think of them as direct competitors to Wolverine), it doesn’t appear that there are any equivalents out there to this feature in the .NET space. MassTransit and NServiceBus both have more limited versions of this capability, but nothing that is as easy or flexible as what Wolverine has at this point. Now, granted, we’re at this point because Marten event stream appends can be sensitive to concurrent access so we’ve had to take concurrency maybe a little more seriously than the pure play asynchronous messaging tools that don’t really have an event sourcing component.