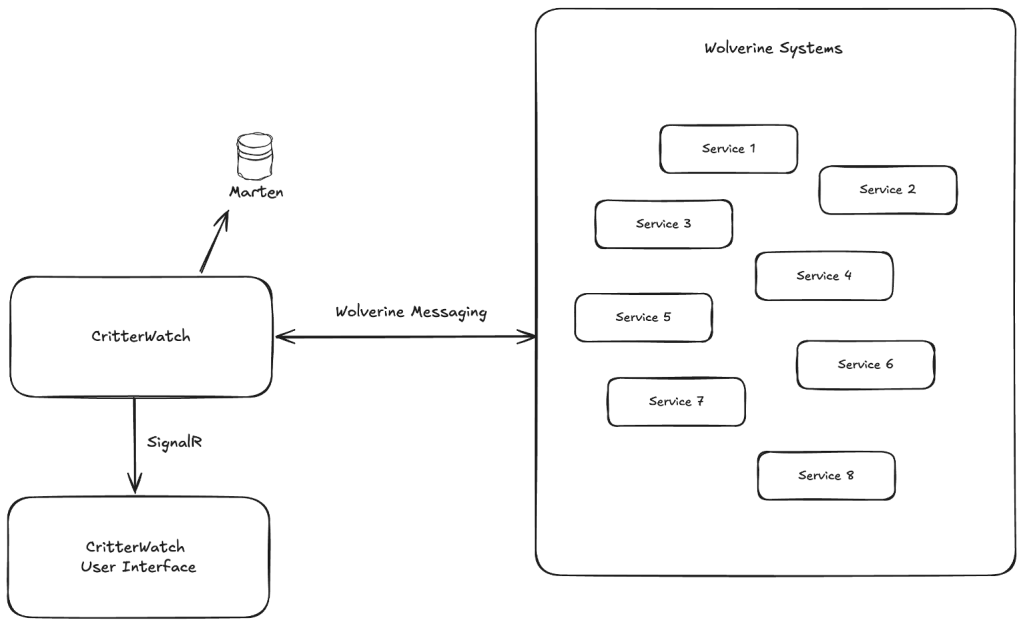

I’m working pretty hard this week and early next to deliver the CritterWatch MVP (our new management and observability console for the Critter Stack) to a JasperFx Software client. One of the things we need to do for testing is to fake out several failure conditions in message handlers to be able to test CritterWatch’s “Dead Letter Queue” management and alerting features. To that end, we have some fake systems that constantly process messages, and we’ve rigged up what I’m going to call the world’s crudest Chaos Monkey in Wolverine middleware:

```csharp
public static async Task Before(ChaosMonkeySettings chaos)
{
    // Configurable slow handler for testing back pressure
    if (chaos.SlowHandlerMs > 0)
    {
        await Task.Delay(chaos.SlowHandlerMs);
    }

    // A failure rate of zero (or less) turns the chaos monkey off
    if (chaos.FailureRate <= 0) return;

    // Chaos monkey: distribute the failure rate equally across 5 exception types
    var perType = chaos.FailureRate / 5.0;
    var next = Random.Shared.NextDouble();

    if (next < perType)
    {
        throw new TripServiceTooBusyException("Just feeling tired at " + DateTime.Now);
    }

    if (next < perType * 2)
    {
        throw new TrackingUnavailableException("Tracking is down at " + DateTime.Now);
    }

    if (next < perType * 3)
    {
        throw new DatabaseIsTiredException("The database wants a break at " + DateTime.Now);
    }

    if (next < perType * 4)
    {
        throw new TransientException("Slow down, you move too fast.");
    }

    if (next < perType * 5)
    {
        throw new OtherTransientException("Slow down, you move too fast.");
    }

    // Anything at or above the failure rate falls through and the handler runs normally
}
```

And these HTTP endpoints let us control it remotely in tests or just when doing exploratory manual testing:

```csharp
private static void MapChaosMonkeyEndpoints(WebApplication app)
{
    var group = app.MapGroup("/api/chaos")
        .WithTags("Chaos Monkey");

    group.MapGet("/", (ChaosMonkeySettings settings) => Results.Ok(settings))
        .WithSummary("Get current chaos monkey settings");

    group.MapPost("/enable", (ChaosMonkeySettings settings) =>
    {
        settings.FailureRate = 0.20;
        return Results.Ok(new { message = "Chaos monkey enabled at 20% failure rate", settings });
    }).WithSummary("Enable chaos monkey with default 20% failure rate");

    group.MapPost("/disable", (ChaosMonkeySettings settings) =>
    {
        settings.FailureRate = 0;
        return Results.Ok(new { message = "Chaos monkey disabled", settings });
    }).WithSummary("Disable chaos monkey (0% failure rate)");

    group.MapPost("/failure-rate/{rate:double}", (double rate, ChaosMonkeySettings settings) =>
    {
        // Keep the rate inside the valid probability range
        rate = Math.Clamp(rate, 0, 1);
        settings.FailureRate = rate;
        return Results.Ok(new { message = $"Failure rate set to {rate:P0}", settings });
    }).WithSummary("Set chaos monkey failure rate (0.0 to 1.0)");

    group.MapPost("/slow-handler/{ms:int}", (int ms, ChaosMonkeySettings settings) =>
    {
        // Negative delays make no sense, so treat them as "no delay"
        ms = Math.Max(0, ms);
        settings.SlowHandlerMs = ms;
        return Results.Ok(new { message = $"Handler delay set to {ms}ms", settings });
    }).WithSummary("Set artificial handler delay in milliseconds (for back pressure testing)");

    group.MapPost("/projection-failure-rate/{rate:double}", (double rate, ChaosMonkeySettings settings) =>
    {
        rate = Math.Clamp(rate, 0, 1);
        settings.ProjectionFailureRate = rate;
        return Results.Ok(new { message = $"Projection failure rate set to {rate:P0}", settings });
    }).WithSummary("Set projection failure rate (0.0 to 1.0)");
}
```

In this case, the Before middleware is just baked into the message handlers, but in your own development you could apply the “chaos monkey” middleware only in testing by way of a Wolverine extension.
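
For what that might look like, here's a rough sketch rather than anything lifted from the CritterWatch test systems. The names ChaosMonkeyExtension and ChaosMonkeyMiddleware are made up for illustration, the shape of ChaosMonkeySettings is only inferred from the three properties used above, and the "development only" check is just one possible way to keep the monkey out of production. It assumes the Wolverine NuGet package and the usual ASP.NET Core hosting model.

```csharp
using System.Threading.Tasks;
using Microsoft.AspNetCore.Builder;
using Microsoft.Extensions.DependencyInjection;
using Microsoft.Extensions.Hosting;
using Wolverine;

var builder = WebApplication.CreateBuilder(args);

// One shared, mutable settings object so the HTTP endpoints
// and the middleware both see the same values
builder.Services.AddSingleton<ChaosMonkeySettings>();

builder.Host.UseWolverine(opts =>
{
    // Assumption: only wire in the chaos monkey for local development and testing
    if (builder.Environment.IsDevelopment())
    {
        opts.Include<ChaosMonkeyExtension>();
    }
});

var app = builder.Build();
app.Run();

// Hypothetical extension that applies the middleware to every message handler
public class ChaosMonkeyExtension : IWolverineExtension
{
    public void Configure(WolverineOptions options)
    {
        // Uses the typeof() overload because a static class
        // cannot be used as a generic type argument
        options.Policies.AddMiddleware(typeof(ChaosMonkeyMiddleware));
    }
}

// Hypothetical home for the Before method shown earlier
public static class ChaosMonkeyMiddleware
{
    public static async Task Before(ChaosMonkeySettings chaos)
    {
        if (chaos.SlowHandlerMs > 0)
        {
            await Task.Delay(chaos.SlowHandlerMs);
        }

        // ...failure injection exactly as in the Before method shown earlier
    }
}

// Assumed shape, inferred from the properties the snippets above touch
public class ChaosMonkeySettings
{
    public double FailureRate { get; set; }
    public int SlowHandlerMs { get; set; }
    public double ProjectionFailureRate { get; set; }
}
```

The upside of going through an extension is that the handlers themselves stay clean, and the failure injection disappears entirely when the extension is not included.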

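The threshold arithmetic in the Before method is also easier to sanity check when it's pulled out into a pure function with no randomness in it. This is only an illustration, not code from the actual system: ChaosBuckets and PickBucket are hypothetical names, and the function returns which of the five exception slots a given roll lands in, or -1 when the message should succeed.

```csharp
using System;

// Hypothetical helper, not part of the original code: isolates the bucket math
// so it can be checked without Random or Wolverine in the picture.
public static class ChaosBuckets
{
    public const int ExceptionTypeCount = 5;

    // roll is expected to be in [0, 1), as produced by Random.Shared.NextDouble().
    // Returns 0..4 for the exception slot to throw, or -1 for "no failure".
    public static int PickBucket(double failureRate, double roll)
    {
        // Rolls at or above the failure rate mean the handler runs normally
        if (failureRate <= 0 || roll >= failureRate) return -1;

        // Each exception type owns an equal slice of the failure rate
        var perType = failureRate / ExceptionTypeCount;
        var bucket = (int)(roll / perType);

        // Guard against floating point rounding at the very top of the range
        return Math.Min(bucket, ExceptionTypeCount - 1);
    }
}
```

With the default 20% failure rate, each slot is 4% wide, so PickBucket(0.20, 0.03) returns 0, PickBucket(0.20, 0.10) returns 2, and PickBucket(0.20, 0.50) returns -1. In other words, each of the five exception types should show up on roughly 4% of messages.
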
And I was probably listening to Simon & Garfunkel when I did the first cut at the chaos monkey: