Hey folks, this is more a brain dump to collect my own thoughts than any kind of tome of accumulated wisdom and experience. Please treat this accordingly, and absolutely chime in on the Critter Stack Discord discussion going on about this right now. I’m also very willing and maybe even likely to change my mind about anything I’m going to say in this post.

There’s been some recent interest and consternation about the combination of Marten within Hot Chocolate as a GraphQL framework. At the same time, I am working with a new JasperFx client who wants to use Hot Chocolate with Marten’s event store functionality behind mutations and projected data behind GraphQL queries.

Long story short, Marten and Hot Chocolate do not mix well without some significant thought and deviation from normal, out of the box Marten usage. Likewise, I’m seeing some significant challenges in using Wolverine behind Hot Chocolate mutations. The rest of this post is a rundown of the issues, sticking points, and possible future ameliorations to make this combination more effective for our various users.

Connections are Sticky in Marten

If you use the out of the box IServiceCollection.AddMarten() mechanism to add Marten into a .NET application, you’re registering Marten’s IQuerySession and IDocumentSession as a Scoped lifetime — which is optimal for usage within short lived ASP.Net Core HTTP requests or within message bus handlers (like Wolverine!). In both of those cases, the session can be expected to have a short lifetime and generally be running in a single thread — which is good because Marten sessions are absolutely not thread safe.

However, for historical reasons (integration with Dapper was a major use case in early Marten usage, so there’s some optimization for that with Marten sessions), Marten sessions have a “sticky” connection lifecycle where an underlying Npgsql connection is retained on the first database query until the session is disposed. Again, if you’re utilizing Marten within ASP.Net Core controller methods or Minimal API calls or Wolverine message handlers or most other service bus frameworks, the underlying IoC container of your application is happily taking care of resource disposal for you at the right times in the request lifecycle.

The last sentence is one of the most important, but poorly understood advantages of using IoC containers in applications in my opinion.

Ponder the following Marten usage:

    public static async Task using_marten(IDocumentStore store)
    {
        // The Marten query session is IDisposable,
        // and that absolutely matters!
        await using var session = store.QuerySession();

        // Marten opens a database connection at the first
        // need for that connection, then holds on to it
        var doc = await session.LoadAsync<User>("jeremy");

        // other code runs, but the session is still open
        // just in case...

        // The connection is closed as the method exits
        // and the session is disposed
    }

The problem with Hot Chocolate comes in because Hot Chocolate tries to parallelize queries when you get multiple queries in one GraphQL request. Since that query batching was pretty well the raison d’être for GraphQL in the first place, you should assume that’s quite common!

Now, consider a naive usage of a Marten session in a Hot Chocolate query:

    public async Task<SomeEntity> GetEntity(
        [Service] IQuerySession session,
        Input input)
    {
        // load data using the session
    }

Without taking some additional steps to serialize access to the IQuerySession across Hot Chocolate queries, you will absolutely hit concurrency errors when Hot Chocolate tries to parallelize data fetching. You can beat this by either forcing Hot Chocolate to serialize access like so:

    builder.Services
        .AddGraphQLServer()
        // Serialize access to the IQuerySession within Hot Chocolate
        .RegisterService<IQuerySession>(ServiceKind.Synchronized);

or by making the session lifetime in your container Transient by doing this:

    builder.Services
        .AddTransient<IQuerySession>(s => s.GetRequiredService<IDocumentStore>().QuerySession());

The first choice can slow down your GraphQL endpoints by serializing access to the IQuerySession while fetching data. The second choice is a non-idiomatic usage of Marten that can foul up usage of Marten within non-GraphQL operations, as you could end up using separate Marten sessions when you really meant to be using a shared instance.
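To make the cost of the first option a bit more concrete, here is a rough, self-contained sketch of what "serialized access" boils down to conceptually. This is not Hot Chocolate's actual implementation, and `SerializedGate` and `MaxObserved` are hypothetical names invented for illustration. The only point is that callers queue up behind a single gate, so work that could have run in parallel runs one at a time.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical illustration only: a gate that lets exactly one
// caller at a time use a resource that is not thread safe.
public sealed class SerializedGate
{
    private readonly SemaphoreSlim _gate = new(1, 1);
    private int _active;

    // Highest number of callers ever observed inside the gate at once
    public int MaxObserved { get; private set; }

    public async Task<T> RunAsync<T>(Func<Task<T>> work)
    {
        // Every caller waits here until the previous one is finished
        await _gate.WaitAsync();
        try
        {
            var now = Interlocked.Increment(ref _active);
            MaxObserved = Math.Max(MaxObserved, now);
            return await work();
        }
        finally
        {
            Interlocked.Decrement(ref _active);
            _gate.Release();
        }
    }
}
```

If five resolvers each take a few milliseconds and all go through one gate like this, the request takes roughly the sum of those times rather than the longest one. Whether that matters depends entirely on your workload.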

If you’re really concerned about needing the queries within a single GraphQL request to be parallelized, there’s another option using object pooling like Jackson Cribb wrote about in the Marten Discord room.

For Marten V7, we’re going to strongly consider some kind of query runner that does not have sticky connections for the express purpose of simplifying Hot Chocolate + Marten integration, but I can’t promise any particular timeline for that work. You can track that work here though.

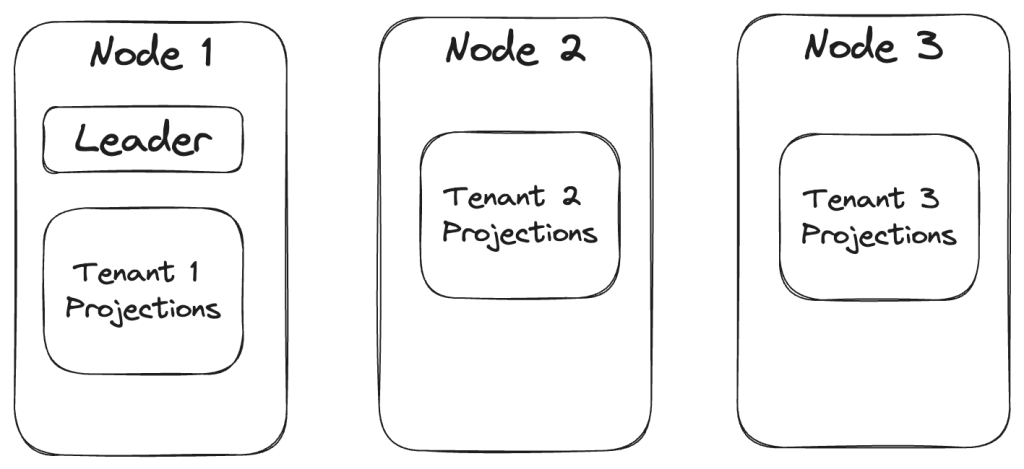

Multi-Tenancy and Session Lifecycles

Multi-Tenancy throws yet another spanner into the works. Consider the following Hot Chocolate query method:

    public IQueryable<User> GetUsers(
        [Service] IDocumentStore documentStore, [GlobalState] string tenant)
    {
        using var session = documentStore.LightweightSession(tenant);

        return session.Query<User>();
    }

Assuming that you’ve got some kind of Hot Chocolate interceptor to detect the tenant id for you, and that value is communicated through Hot Chocolate’s global state mechanism, you might think to open a Marten session directly like the code above. That code will absolutely not work under any kind of system load because it puts you into a damned if you do, damned if you don’t situation. If you dispose the session before this method completes, the IQueryable execution will throw an ObjectDisposedException when Hot Chocolate tries to execute the query. If you *don’t* dispose the session, the IoC container for the request scope doesn’t know about it and can’t dispose it for you, so Marten is going to hang on to the open database connection until garbage collection comes for it. Under a significant load, that means your system will behave very badly when the database connection pool is exhausted!
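The "damned if you do" half of that is just deferred execution at work, and it can be reproduced without Marten at all. The sketch below uses a hypothetical `FakeSession` (not Marten's API) whose query only touches the "connection" when it is enumerated, which is after the `using` has already disposed it.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical stand-in for a session: NOT Marten's real API, just a
// disposable object whose queries execute lazily.
public sealed class FakeSession : IDisposable
{
    private readonly string[] _names = { "jeremy", "oskar" };
    private bool _disposed;

    // Nothing runs here. The iterator body below only executes
    // when somebody enumerates the returned query.
    public IQueryable<string> Query() => Rows().AsQueryable();

    private IEnumerable<string> Rows()
    {
        if (_disposed) throw new ObjectDisposedException(nameof(FakeSession));
        foreach (var name in _names) yield return name;
    }

    public void Dispose() => _disposed = true;
}

public static class DeferredExecutionDemo
{
    // Same shape as the resolver above: the session is disposed as the
    // method returns, before anything has enumerated the query.
    public static IQueryable<string> GetNames()
    {
        using var session = new FakeSession();
        return session.Query();
    }

    // True when enumerating the returned query fails because the
    // session behind it is already gone.
    public static bool EnumerationFailsAfterDispose()
    {
        try
        {
            _ = GetNames().ToList();
            return false;
        }
        catch (ObjectDisposedException)
        {
            return true;
        }
    }
}
```

The resolver returns a description of a query, not its results, so whoever executes it later needs the session to still be alive.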

What we need is some way for our sessions to be created for the right tenant for the current request, but have the session tracked somehow so that the scoped IoC container can be used to clean up the open sessions at the end of the request. As a first pass, I’m using this crude approach with a service that’s registered with the IoC container with a Scoped lifetime:

    /// <summary>
    /// This will be Scoped in the container per request, "knows" what
    /// the tenant id for the request is. Also tracks the active Marten
    /// session
    /// </summary>
    public class ActiveTenant : IDisposable
    {
        public ActiveTenant(IHttpContextAccessor contextAccessor, IDocumentStore store)
        {
            if (contextAccessor.HttpContext is not null)
            {
                // Try to detect the active tenant id from
                // the current HttpContext
                var context = contextAccessor.HttpContext;
                if (context.Request.Headers.TryGetValue("tenant", out var tenant))
                {
                    var tenantId = tenant.FirstOrDefault();
                    if (tenantId.IsNotEmpty())
                    {
                        // Open the session for the detected tenant id
                        this.Session = store.QuerySession(tenantId!);
                    }
                }
            }

            // No tenant header found, so fall back to the default tenant
            this.Session ??= store.QuerySession();
        }

        public IQuerySession Session { get; }

        public void Dispose()
        {
            this.Session.Dispose();
        }
    }
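The Scoped registration itself isn't shown above, so here is a minimal sketch of what it could look like. It is a Program.cs fragment that assumes the usual `builder` from `WebApplication.CreateBuilder(args)`, and it assumes nothing else has already registered `IHttpContextAccessor`, which `ActiveTenant` takes in its constructor.

```csharp
// Program.cs fragment, assuming:
// var builder = WebApplication.CreateBuilder(args);

// ActiveTenant depends on IHttpContextAccessor
builder.Services.AddHttpContextAccessor();

// One ActiveTenant per request scope, so the container disposes it
// (and the Marten session it holds) when the request ends
builder.Services.AddScoped<ActiveTenant>();
```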

Now, rewrite the Hot Chocolate query from way up above with:

    public IQueryable<User> GetUsers(
        [Service] ActiveTenant tenant)
    {
        return tenant.Session.Query<User>();
    }

That does still have to be paired with this Hot Chocolate configuration to dodge the concurrent access problems like so:

    builder.Services
        .AddGraphQLServer()
        .RegisterService<ActiveTenant>(ServiceKind.Synchronized);

Hot Chocolate Mutations

I took some time this morning to research Hot Chocolate’s Mutation model (think “writes”). Since my client is using Marten as an event store and I’m me, I was looking for opportunities to:

- Possibly use Wolverine as a true “mediator” within Hot Chocolate mutations to take advantage of the aggregate handler workflow from the Wolverine + Marten integration

- Or find an easy to use equivalent to the aggregate handler workflow within Hot Chocolate Mutation classes

- At a minimum, look for ways to tie in the Wolverine transactional outbox within the Hot Chocolate mutation workflow

What I’ve found so far has been a series of blockers once you zero in on the fact that Hot Chocolate is built around the possibility of having zero to many mutation messages in any one request — and that that request should be treated as a logical transaction such that every mutation should either succeed or fail together. With that being said, I see the blockers as:

- Wolverine doesn’t yet support message batching in any kind of built in way, and is unlikely to do so before a 2.0 release that isn’t even so much as a glimmer in my eyes yet

- Hot Chocolate depends on ambient transactions (Boo!) to manage the transaction boundaries. That by itself almost knocks out the out of the box Marten integration and forces you to use more custom session mechanics to enlist in ambient transactions.

- The existing Wolverine transactional outbox depends on an explicit “Flush” operation after the actual database transaction is committed. That’s handled quite gracefully by Wolverine’s Marten integration in normal usage (in my humble and very biased opinion), but that can’t work across multiple mutations in one GraphQL request

- There is a mechanism to replace the transaction boundary management in Hot Chocolate, but it was very clearly built around ambient transactions and it has a synchronous signature to commit the transaction. Like any sane server side development framework, Wolverine performs the IO intensive database transactional mechanics and outbox flushing operations with asynchronous methods. To fit that within Hot Chocolate’s transactional boundary abstraction would require calls to turn the asynchronous Marten and Wolverine APIs into synchronous calls with GetAwaiter().GetResult(), which is tantamount to painting a bullseye on your chest and daring the Fates to not make your application crater with deadlocks under load.
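For anyone who hasn't been bitten by it, the last blocker in the list above looks roughly like the sketch below. Everything here is a hypothetical stand-in: `ISyncTransactionScope` only mimics the synchronous commit shape described above and is not Hot Chocolate's actual interface, and `AsyncUnitOfWork` fakes the asynchronous commit and outbox flush. It is shown as the thing to avoid, not as a recommendation.

```csharp
using System;
using System.Threading.Tasks;

// Hypothetical stand-in for a transaction abstraction with a
// synchronous commit. NOT Hot Chocolate's real interface.
public interface ISyncTransactionScope : IDisposable
{
    void Complete();
}

// Hypothetical stand-in for asynchronous persistence work
public sealed class AsyncUnitOfWork
{
    public bool Committed { get; private set; }

    public async Task CommitAsync()
    {
        await Task.Delay(10); // simulates database and outbox I/O
        Committed = true;
    }
}

public sealed class BlockingTransactionScope : ISyncTransactionScope
{
    private readonly AsyncUnitOfWork _unitOfWork;

    public BlockingTransactionScope(AsyncUnitOfWork unitOfWork)
        => _unitOfWork = unitOfWork;

    public void Complete()
    {
        // Sync-over-async: the calling thread is parked here until the
        // asynchronous commit finishes on some other thread.
        _unitOfWork.CommitAsync().GetAwaiter().GetResult();
    }

    public void Dispose()
    {
    }
}
```

This runs fine in a quick test. The trouble shows up under load, where every in-flight request parks a thread pool thread while waiting on work that itself needs a thread pool thread to complete, and that starvation looks an awful lot like a deadlock from the outside.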

I think at this point, my recommended approach is going to be to forgo integrating Wolverine into Hot Chocolate mutations altogether, with some combination of:

- Don’t use Hot Chocolate mutations whatsoever if there’s no need for the operation batching and use old fashioned ASP.Net Core with or without Wolverine’s HTTP support

- Or document a pattern for using the Decider pattern within Hot Chocolate as an alternative to Wolverine’s “aggregate handler” usage. The goal here is to document a way for developers to keep infrastructure out of business logic code and maximize testability

- If using Hot Chocolate mutations, I think there’s a need for a better outbox subscription model directly against Marten’s event store. The approach Oskar outlined here would certainly be a viable start, but I’d rather have an improved version of that built directly into Wolverine’s Marten integration. The goal here is to allow for an Event Driven Architecture which Wolverine supports quite well and the application in question could definitely utilize, but do so without creating any complexity around the Hot Chocolate integration.
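As a rough idea of what the Decider option in the list above might look like, here is a minimal sketch in plain C# with no Marten, Wolverine, or Hot Chocolate types in it. Every name (`OrderDecider`, `ShipOrder`, `OrderState`, and the events) is hypothetical and only there for illustration. The shape is the usual one for the pattern: a pure `Decide` function from command and current state to new events, and a pure `Evolve` function that folds an event into the state.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// All of these types are hypothetical examples
public abstract record OrderEvent;
public sealed record OrderPlaced(string OrderId) : OrderEvent;
public sealed record OrderShipped(string OrderId) : OrderEvent;

public sealed record ShipOrder(string OrderId);

public sealed record OrderState(bool Placed, bool Shipped)
{
    public static readonly OrderState Initial = new(false, false);
}

public static class OrderDecider
{
    // Decide: pure function from (command, current state) to new events.
    // No sessions, no I/O, so it is trivial to unit test.
    public static IReadOnlyList<OrderEvent> Decide(ShipOrder command, OrderState state)
    {
        if (!state.Placed)
            throw new InvalidOperationException("Order has not been placed");

        // Shipping twice is treated as a no-op here
        if (state.Shipped) return Array.Empty<OrderEvent>();

        return new OrderEvent[] { new OrderShipped(command.OrderId) };
    }

    // Evolve: pure function folding one event into the state
    public static OrderState Evolve(OrderState state, OrderEvent @event) => @event switch
    {
        OrderPlaced => state with { Placed = true },
        OrderShipped => state with { Shipped = true },
        _ => state
    };

    // Rebuild the current state from the event history
    public static OrderState Replay(IEnumerable<OrderEvent> events) =>
        events.Aggregate(OrderState.Initial, Evolve);
}
```

A mutation method would then be left with only the infrastructure work: load the events for the stream, call `Replay` and `Decide`, and append whatever comes back. How that plugs into Hot Chocolate's transaction handling is exactly the open question from the blockers above.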

In the long, long term:

- Add a message batch processing option to Wolverine that manages transactional boundaries between messages for you

- Have a significant palaver between the Marten/Wolverine core teams and the fine folks behind Hot Chocolate to iron a bit of this out

My Recommendations For Now

Honestly, I don’t think that I would recommend using GraphQL in general in your system whatsoever unless you’re building some kind of composite user interface where GraphQL would be beneficial in reducing chattiness between your user interface and backing service by allowing unrelated components in your UI to happily batch up requests to your server. Maybe also if you were using GraphQL as a service gateway to combine disparate data sources on the server side in a consistent way, but even then I wouldn’t automatically use GraphQL.

I’m not knowledgeable enough to say how much GraphQL usage would help speed up your user interface development, so take all that I said in the paragraph above with a grain of salt.

At this point I would urge folks to be cautious about using the Critter Stack with Hot Chocolate. Marten can be used if you’re aware of the potential problems I discussed above. Even when we beat the sticky connection thing and the session lifecycle problems, Marten’s basic model of storing JSON in the database is really not optimized for plucking out individual fields in Select() transforms. While Marten does support Select() transforms, it may not be as efficient as the equivalent functionality on top of a relational database model would be. It’s possible that GraphQL might be a better fit with Marten if you were primarily using projected read models purposely designed for client consumption through GraphQL or even projecting event data to flat tables that are queried by Hot Chocolate.

Wolverine with Hot Chocolate, maybe not so much, at least if the transactional boundary issues described above would be a problem for you.

I would urge you to do load testing with any usage of Hot Chocolate, as I think from peeking into its internals that it’s not the most efficient server side tooling around. Again, that doesn’t mean that you will automatically have performance problems with Hot Chocolate, but I think you should be cautious with its usage.

In general, I’d say that GraphQL creates way too much abstraction over your underlying data storage — and my experience consistently says that abstracted data access can lead to some very poor system performance by both making your application harder to understand and by eliminating the usage of advanced features of your data storage tooling behind least common denominator abstractions.

This took much longer than I wanted it to, as always. I might write a smaller follow up on how I’d theoretically go about building an optimized GraphQL layer from scratch for Marten, which I have zero intention of ever doing, but it’s a fun thought experiment.