TL;DR -> I’m dubious about whether the currently popular “modular monolith” idea will actually pay off, but first let’s talk about how we got to this point.

The pendulum in software development frequently swings back and forth between alternatives or extremes as the community struggles to achieve better results or becomes disillusioned with one popular approach or another. It’s easy, especially for older developers like me, to give in to cynicism and dismiss the hot new idea as exactly the same as something from 5-10 years earlier. That cynicism usually isn’t helpful, though, because there are often real and important differences in the new approach that are easy to overlook if you jump to quick comparisons between the new thing and something familiar.

I’m working with several JasperFx Software clients right now that are either wrestling with brownfield systems they wish were more modular or getting started on large efforts where they can already see the need for modularity. Quite naturally, that brings the concept of “modular monoliths” to the forefront of my mind as a possible approach to creating more maintainable software for my clients.

What Do We Want?

Before any real discussion about the pendulum swinging from monoliths to micro-services and back to modular monoliths, let’s talk a bit about what I think any given software development team really wants from their large codebase:

- The codebase is very easy to “clone n’ go”, meaning that there’s very little friction in being able to configure your development environment to run the code and test suites

- Fast builds, fast test runs, snappy IDE performance. Call it whatever you want, but I want developers to always be working with quick feedback cycles

- The code is easy to reason about. This is absolutely vital in larger, long-lived codebases as a way to avoid the regression bugs that can easily afflict larger systems. It’s also important just to keep teams productive over time

- The ability to upgrade or even replace technologies over time without requiring risky major projects devoted solely to technology upgrades, which your business folks are very loath to ever approve

- This one might be just me, but I want a minimum of repetitive code ceremony within the codebase. I’ve routinely seen both monolithic codebases and micro-service strategies fail, at least in part, because too much repetitive code ceremony cluttered up the code and slowed down development work. The “Critter Stack” tools (Marten & Wolverine) are absolutely built with this low ceremony mantra in mind.

- Any given part of the codebase should be small enough that a single “two pizza” team should be able to completely own that part of the code

Some Non-Technical Stuff That Matters

I work under the assumption that most of us are really professionals and do care about trying to do good work. That being said, even for skilled developers who absolutely care about the quality of their work, there are some common organizational problems that lead to runaway technical debt:

- Micromanagement – Micromanagement crushes anybody’s sense of ownership or incentive to innovate, and developers are no different. As a diehard fan of early 2000s idealistic Extreme Programming, I of course blame modern Scrum. Bad technical leads or architects can certainly hurt as well, though. Even as the most senior technical person on a team, you have to listen to the concerns of every other team member and allow new ideas to come from anybody

- Overloaded teams – I think that keeping teams running near their throughput capacity on new features over time inevitably leads to overwhelming technical debt that can easily debilitate future work in that system. The harsh reality that many business folks don’t understand is that, in the long run, development teams need some slack time for experimentation or incremental technical debt reduction tasks

- Lack of Trust – This is probably closely related to, or even the root cause of, micromanagement, but teams are far more effective when there is a strong trust relationship between the technical folks and the business. Technical teams need to be able to communicate the need to occasionally slow down on feature work to address technical concerns, and to have those concerns taken seriously by the business. Of course, as technical folks, we need to work on communicating our concerns in terms of business impact and always seek to maintain the business’s trust.

Old Fashioned Monoliths

Let’s consider the past couple of swings of the pendulum. First there was the simple concept of building large systems as one big codebase: the “monolith” we all fear, even though none of us likely set out to create one on purpose. Doing some research today, I saw people describe old fashioned monoliths as problematic because they were, in Brian Foote and Joseph Yoder’s immortal words:

A BIG BALL OF MUD is haphazardly structured, sprawling, sloppy, duct-tape and bailing wire, spaghetti code jungle.

Brian Foote and Joseph Yoder’s Big Ball of Mud paper

My recent, very negative experiences with monolithic applications have been quite different, though. What I’ve seen is that these monoliths had a consistent structure and a clear architectural philosophy, but the very ideas the teams originally adopted to keep the system maintainable were probably among the causes of the technical debt riddling their monolithic application. In my opinion, these monolithic codebases have been difficult to work with because of:

- The usage of prescriptive architectures like Clean Architecture or Onion Architecture solution templates that alternately overcomplicated things or failed to manage complexity over time because the teams did not deviate from the prescriptions

- Overreliance on Ports and Adapters-style thinking that led teams to introduce more and more abstractions as the system grew, which often made the code hard to follow or reason about. That can also hurt performance through the sheer number of objects being created, and it absolutely leads to poor performance when the proliferation of abstractions keeps teams from easily reasoning about their code’s interactions with the database

- The predominance of layered architecture thinking in big systems means that closely related code is widely spread out over a big codebase

- The difficulty of upgrading technologies across a monolithic codebase simply because of the size of the effort, which I partially blame on the proliferation of common base types and marker interfaces promoted in the prescriptive Clean/Onion/Hexagonal/Ports & Adapters style architectural guidance
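To make the point about abstractions obscuring database interactions a little more concrete, here is a minimal, hypothetical sketch. It uses an in-memory fake database that counts round trips, and every class and function name in it is invented for illustration. A “port” (`OrderRepository`) and its “adapter” make a per-customer query look like a cheap method call, so an N+1 query pattern hides inside what reads like an ordinary loop. This is one illustration of the failure mode, not a claim that every Ports and Adapters codebase suffers from it.

```python
from typing import Protocol


class FakeDatabase:
    """Hypothetical in-memory stand-in for a real database; counts round trips."""

    def __init__(self, customers: dict[int, str], orders: dict[int, list[int]]):
        self._customers = customers  # customer_id -> name
        self._orders = orders        # customer_id -> order ids
        self.round_trips = 0

    def query_customers(self) -> dict[int, str]:
        self.round_trips += 1
        return dict(self._customers)

    def query_orders_for(self, customer_id: int) -> list[int]:
        self.round_trips += 1
        return list(self._orders.get(customer_id, []))

    def query_orders_for_many(self, customer_ids: list[int]) -> dict[int, list[int]]:
        self.round_trips += 1
        return {cid: list(self._orders.get(cid, [])) for cid in customer_ids}


class OrderRepository(Protocol):
    """The "port": callers see a plain method call, not a database query."""

    def orders_for(self, customer_id: int) -> list[int]: ...


class DatabaseOrderRepository:
    """The "adapter": each call quietly becomes one database round trip."""

    def __init__(self, db: FakeDatabase):
        self._db = db

    def orders_for(self, customer_id: int) -> list[int]:
        return self._db.query_orders_for(customer_id)


class CustomerReport:
    def __init__(self, db: FakeDatabase, orders: OrderRepository):
        self._db = db
        self._orders = orders

    def order_counts(self) -> dict[str, int]:
        customers = self._db.query_customers()
        # Reads like an in-memory loop, but issues one query per customer (N+1)
        return {name: len(self._orders.orders_for(cid)) for cid, name in customers.items()}


def order_counts_explicit(db: FakeDatabase) -> dict[str, int]:
    # Same result with the data access in plain sight: two queries regardless of N
    customers = db.query_customers()
    orders = db.query_orders_for_many(list(customers))
    return {name: len(orders[cid]) for cid, name in customers.items()}
```

With three customers, `CustomerReport.order_counts()` makes four round trips (one for the customers plus one per customer). `order_counts_explicit` makes two and returns the same result. Nothing about the port itself is wrong here. The problem is that the cost of the call is invisible at the call site.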

I hit these themes at NDC Oslo 2023 in this talk if you’re interested:

Alright, I think we can all agree on the pain of monolithic applications without enough structure, and we can agree to disagree on my negative opinions about Clean et al. architectures, but let’s move on to micro-services.

Obvious Problems with Micro-Services

I’m still bullish on the long-term usefulness of micro-services, or really just “services that aren’t massive, contain just one cohesive bounded context, and can actually run mostly independently.” But let’s acknowledge the very real downsides of micro-services that folks are running into:

- Distributed development is hard, full stop. And micro-services inevitably mean more distributed development, debugging, and monitoring

- It’s hard to get the service boundaries right in a complex business system, and it’s disastrous when you get them wrong. If your team frequently finds itself having to change multiple services at the same time, your boundaries are clearly wrong and you’re doing shotgun surgery, except it’s worse because you may have to make changes in completely separate codebases and code repositories.

- Again, if the boundaries aren’t quite right, you can easily get into a situation where the services have to be chatty with each other and send a lot more messages. That’s a recipe for poor performance and brittle systems.

- Testing can be a lot harder when you need more testing across services rather than being able to rely on testing one service at a time
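As a rough illustration of how a boundary choice drives chattiness, here is a hypothetical sketch with an “Ordering” service that needs prices owned by a “Catalog” service. The names are invented, and the remote client is faked with a call counter. If the boundary leaves Ordering without the pricing data it needs, totaling an order costs one remote call per line. Letting Ordering keep the price it captured when a line was added is one common way to avoid that. It is assumed here purely for illustration and is not a universal fix.

```python
from dataclasses import dataclass


class CatalogClient:
    """Hypothetical stand-in for a remote Catalog service; counts network calls."""

    def __init__(self, prices: dict[str, int]):
        self._prices = prices  # sku -> unit price in cents
        self.calls = 0

    def price_of(self, sku: str) -> int:
        self.calls += 1  # in a real system, each call would be a network round trip
        return self._prices[sku]


@dataclass
class OrderLine:
    sku: str
    quantity: int
    unit_price: int  # price captured by Ordering when the line was added


def total_via_catalog(lines: list[OrderLine], catalog: CatalogClient) -> int:
    # Boundary where Ordering must ask Catalog about every line: N remote calls
    return sum(catalog.price_of(line.sku) * line.quantity for line in lines)


def total_from_own_data(lines: list[OrderLine]) -> int:
    # Boundary where Ordering owns the data it needs: no remote calls at all
    return sum(line.unit_price * line.quantity for line in lines)
```

Both functions compute the same total, but the first one's cost grows with the number of lines, and every one of those calls is a chance for latency or a partial failure.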

I’ve also seen high ceremony approaches completely defeat micro-service strategies by adding so much overhead to splitting up a codebase that there was no net value gained from breaking up the previous monolith. Again, I’m team low ceremony in almost any circumstance.

So what about Modular Monoliths?

Monoliths have been problematic, then micro-services turned out to be differently problematic. So let’s swing the pendulum back partway but focus more on making our monoliths modular for easier, more maintainable long term development. Great theory, and it’s spawning a lot of software conference talks and sage chin wagging.

This gets us to the “modular monolith” idea that’s popular now, but I’m unfortunately dubious about the mechanics and about whether this is just some “modular” lipstick on the old “monolith” pig.

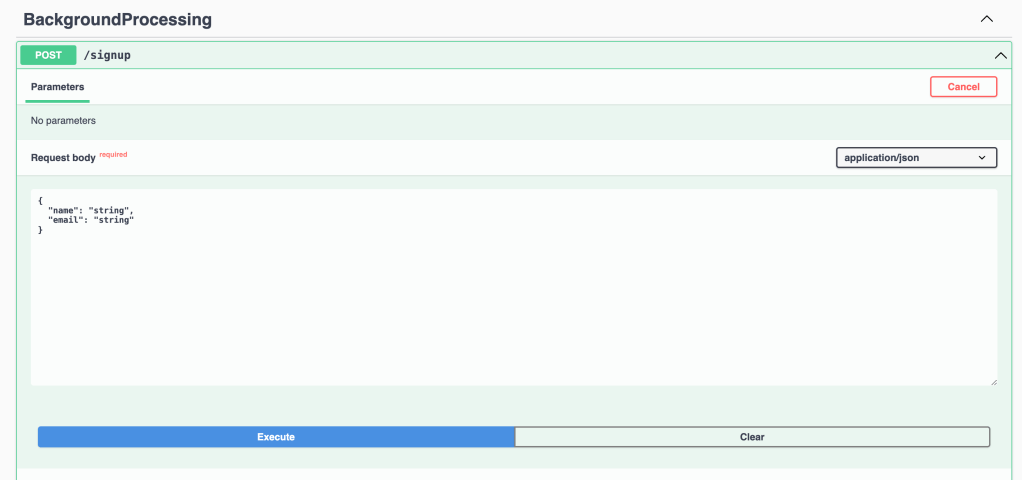

In my next post, I’m going to try to describe my concerns and thoughts about how a “modular monolith” architecture might actually work out. I’m also concerned about how well both Marten and Wolverine are going to play within a modular monolith, and I’d like to get into some nuts and bolts about how those tools work now and how they maybe need to change to better accommodate the “modular monolith” idea.