Hey, JasperFx Software is more than just some silly-named open source frameworks. We’re also deeply experienced in test-driven development, designing for testability, and making test automation work without driving into the ditch with over-dependence on slow, brittle Selenium testing. Hit us up about what we could do to help you be more successful in your own test automation or TDD efforts.

I have been working furiously on getting an incremental Wolverine release out this week, with one of the new shiny features being end to end support for multi-tenancy (the work in progress GitHub issue is here) through Wolverine.Http endpoints. I hit a point today where I have to admit that I can’t finish that work today, but did see the potential for a blog post on the Alba library (also part of JasperFx’s OSS offerings) and how I was using Alba today to write integration tests for this new functionality, show how the sausage is being made, and even work in a test-first manner.

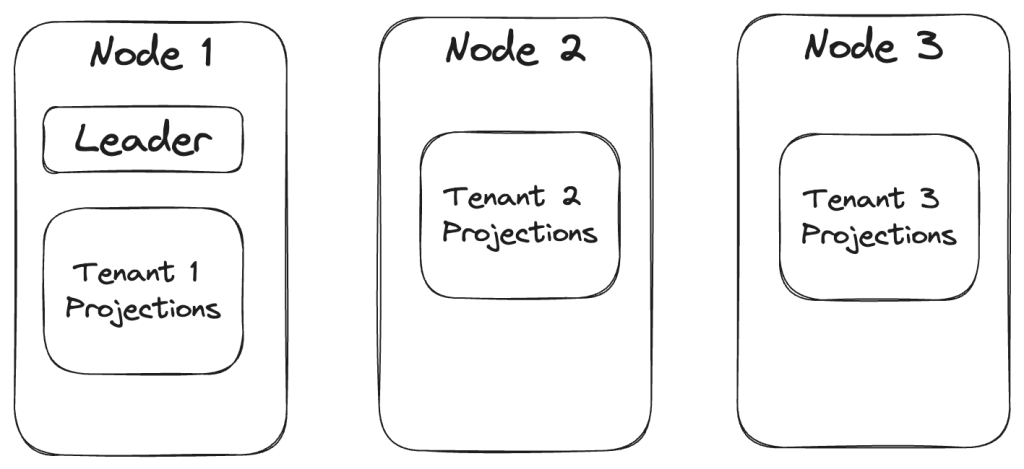

To put the desired functionality in context, let’s say that we’re building a “Todo” web service using Marten for persistence. Moreover, we’re expecting this system to have a massive number of users and want to be sure to isolate data between customers, so we plan on using Marten’s support for using a separate database for each tenant (think user organization in this case). Within that “Todo” system, let’s say that we’ve got a very simple web service endpoint to just serve up all the completed Todo documents for the current tenant like this one:

```csharp
[WolverineGet("/todoitems/{tenant}/complete")]
public static Task<IReadOnlyList<Todo>> GetComplete(IQuerySession session)
    => session
        .Query<Todo>()
        .Where(x => x.IsComplete)
        .ToListAsync();
```

Now, you’ll notice that there is a route argument named “tenant” that isn’t consumed at all by this web api endpoint. What I want Wolverine to do in this case is to infer that the “tenant” value within the route is the current tenant id for the request, and quietly select the correct Marten tenant database for me without me having to write a lot of repetitive code.
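For contrast, here is a rough sketch of the kind of repetitive code I’d like to avoid, where the endpoint consumes the route value itself and opens a Marten session for that tenant by hand. The `Todo` shape, the class name, and the `/manual/...` route are all made up for illustration, and this assumes Marten is already configured for multi-tenancy:

```csharp
using System.Collections.Generic;
using System.Linq;
using System.Threading.Tasks;
using Marten;
using Wolverine.Http;

// Hypothetical document type standing in for the Todo used in this post
public class Todo
{
    public int Id { get; set; }
    public string? Name { get; set; }
    public bool IsComplete { get; set; }
}

public static class ManualTenantTodoEndpoints
{
    // Hypothetical route, only here so it doesn't clash with the real endpoint
    [WolverineGet("/manual/todoitems/{tenant}/complete")]
    public static async Task<IReadOnlyList<Todo>> GetComplete(
        string tenant,          // bound from the {tenant} route argument
        IDocumentStore store)   // injected from the application's services
    {
        // Explicitly open a read-only session for this one tenant
        await using var session = store.QuerySession(tenant);

        return await session
            .Query<Todo>()
            .Where(x => x.IsComplete)
            .ToListAsync();
    }
}
```

Multiply that by every endpoint in the system and you can see why I’d rather have Wolverine select the tenant for me.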

Just a note, all of this is work in progress and I haven’t even pushed the code at the time of writing this post. Soon. Maybe tomorrow.

Stepping into the bootstrapping for this web service, I’m going to add these new lines of code to the Todo web service’s Program file to teach Wolverine.HTTP how to handle multi-tenancy detection for me:

```csharp
// Let's add in Wolverine HTTP endpoints to the routing tree
app.MapWolverineEndpoints(opts =>
{
    // Letting Wolverine HTTP automatically detect the tenant id!
    opts.TenantId.IsRouteArgumentNamed("tenant");

    // Assert that the tenant id was successfully detected,
    // or pull the rip cord on the request and return a
    // 400 w/ ProblemDetails
    opts.TenantId.AssertExists();
});
```

So that’s some of the desired, built in multi-tenancy features going into Wolverine.HTTP 1.7 some time soon. Back to the actual construction of these new features and how I used Alba this morning to drive the coding.

I started by asking around on social media about what other folks used as strategies to detect the tenant id in ASP.Net Core multi-tenancy, and came up with this list (plus a few other options):

- Use a custom request header

- Use a named route argument

- Use a named query string value (I hate using the query string myself, but like cockroaches or scorpions in our Central Texas house, they always sneak in somehow)

- Use an expected Claim on the ClaimsPrincipal

- Mix and match the strategies above because you’re inevitably retrofitting this to an existing system

- Use sub domain names (I’m arbitrarily skipping this one for now just because it was going to be harder to test and I’m pressed for time this week)

Once I saw a little bit of consensus on the most common strategies (and thank you to everyone who responded to me today), I jotted down some tasks in GitHub-flavored markdown (I *love* this feature) on what the configuration API would look like and my guesses for development tasks:

- [x] `WolverineHttpOptions.TenantId.IsRouteArgumentNamed("foo")` -- creates a policy

- [ ] `[TenantId("route arg")]`, or make `[TenantId]` on a route parameter for one offs. Will need to throw if not a route argument

- [x] `WolverineHttpOptions.TenantId.IsQueryStringValue("key")` -- creates policy

- [x] `WolverineHttpOptions.TenantId.IsRequestHeaderValue("key")` -- creates policy

- [x] `WolverineHttpOptions.TenantId.IsClaimNamed("key")` -- creates policy

- [ ] New way to add custom middleware that's first inline

- [ ] Documentation on custom strategies

- [ ] Way to register the "preprocess context" middleware methods

- [x] Middleware or policy that blows it up with no tenant id detected. Use ProblemDetails

- [ ] Need an attribute to opt into tenant id is required, or tenant id is NOT required on certain endpoints

Knowing that I was going to need to quickly stand up different configurations of a test web service’s IHost, I started with this skeleton that I hoped would make the test setup relatively easy:

```csharp
public class multi_tenancy_detection_and_integration : IAsyncDisposable, IDisposable
{
    private IAlbaHost theHost;

    public void Dispose()
    {
        theHost.Dispose();
    }

    // The configuration of the Wolverine.HTTP endpoints is the only variable
    // part of the test, so isolate all this test setup noise here so
    // each test can more clearly communicate the relationship between
    // Wolverine configuration and the desired behavior
    protected async Task configure(Action<WolverineHttpOptions> configure)
    {
        var builder = WebApplication.CreateBuilder(Array.Empty<string>());
        builder.Services.AddScoped<IUserService, UserService>();

        // Haven't gotten around to it yet, but there'll be some end to
        // end tests in a bit from the ASP.Net request all the way down
        // to the underlying tenant databases
        builder.Services.AddMarten(Servers.PostgresConnectionString)
            .IntegrateWithWolverine();

        // Defaults are good enough here
        builder.Host.UseWolverine();

        // Setting up Alba stubbed authentication so that we can fake
        // out ClaimsPrincipal data on requests later
        var securityStub = new AuthenticationStub()
            .With("foo", "bar")
            .With(JwtRegisteredClaimNames.Email, "guy@company.com")
            .WithName("jeremy");

        // Spinning up a test application using Alba
        theHost = await AlbaHost.For(builder, app =>
        {
            app.MapWolverineEndpoints(configure);
        }, securityStub);
    }

    public async ValueTask DisposeAsync()
    {
        // Hey, this is important!
        // Make sure you clean up after your tests
        // to make the subsequent tests run cleanly
        await theHost.StopAsync();
    }

    // The [Fact] test methods shown in the rest of this post live here
}
```

Now, the intermediate step of tenant detection even before Marten itself gets involved is to analyze the HttpContext for the current request, try to derive the tenant id, then set the MessageContext.TenantId in Wolverine for this current request — which Wolverine’s Marten integration will use a little later to create a Marten session pointing at the correct database for that tenant.
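To make that intermediate step a little more concrete, here’s a minimal sketch of what “try to derive the tenant id from the HttpContext” could look like using nothing but plain ASP.Net Core types. Every name in here (`TenantIdSource`, `TenantIdSources`, `Detect`) is hypothetical and invented for illustration. This is not Wolverine’s actual implementation, just the general shape of “try each strategy in order and take the first hit”:

```csharp
using System.Collections.Generic;
using System.Linq;
using Microsoft.AspNetCore.Http;

// Hypothetical: one strategy for pulling a tenant id out of a request
public delegate string? TenantIdSource(HttpContext context);

public static class TenantIdSources
{
    // Named route argument, e.g. /tenant/route/{tenant}
    public static TenantIdSource RouteArgument(string name) =>
        context => context.Request.RouteValues.TryGetValue(name, out var value)
            ? value?.ToString()
            : null;

    // Named query string value, e.g. /tenant?t=bar
    public static TenantIdSource QueryString(string key) =>
        context => context.Request.Query.TryGetValue(key, out var values)
            ? values.FirstOrDefault()
            : null;

    // Custom request header
    public static TenantIdSource RequestHeader(string key) =>
        context => context.Request.Headers.TryGetValue(key, out var values)
            ? values.FirstOrDefault()
            : null;

    // Expected claim type on the ClaimsPrincipal
    public static TenantIdSource Claim(string claimType) =>
        context => context.User.FindFirst(claimType)?.Value;

    // "Mix and match": the first strategy that yields a non-empty value wins
    public static string? Detect(HttpContext context, IEnumerable<TenantIdSource> sources) =>
        sources
            .Select(source => source(context))
            .FirstOrDefault(id => !string.IsNullOrEmpty(id));
}

public static class TenantIdSourcesDemo
{
    public static string? Run()
    {
        // DefaultHttpContext lets us fake a request without any web server
        var context = new DefaultHttpContext();
        context.Request.QueryString = new QueryString("?t=bar");

        // No "tenant" header on this request, so the query string strategy is used
        return TenantIdSources.Detect(context, new[]
        {
            TenantIdSources.RequestHeader("tenant"),
            TenantIdSources.QueryString("t")
        });
    }
}
```

In the real feature, whatever value comes out of that detection is what gets assigned to the MessageContext.TenantId for the current request.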

Just to measure the tenant id detection — because that’s what I want to build and test first before even trying to put everything together with a real database too — I built these two simple GET endpoints with Wolverine.HTTP:

```csharp
public static class TenantedEndpoints
{
    [WolverineGet("/tenant/route/{tenant}")]
    public static string GetTenantIdFromRoute(IMessageBus bus)
    {
        return bus.TenantId;
    }

    [WolverineGet("/tenant")]
    public static string GetTenantIdFromWhatever(IMessageBus bus)
    {
        return bus.TenantId;
    }
}
```

That, folks, is the scintillating code that brings droves of readership to my blog!

Alright, so now I’ve got some support code for the “Arrange” and “Assert” part of my Arrange/Act/Assert workflow. To finally jump into a real test, I started with detecting the tenant id with a named route pattern using Alba with this code:

```csharp
[Fact]
public async Task get_the_tenant_id_from_route_value()
{
    // Set up a new application with the desired configuration
    await configure(opts => opts.TenantId.IsRouteArgumentNamed("tenant"));

    // Run a web request end to end in memory
    var result = await theHost.Scenario(x => x.Get.Url("/tenant/route/chartreuse"));

    // Make sure it worked!
    // ZZ Top FTW! https://www.youtube.com/watch?v=uTjgZEapJb8
    result.ReadAsText().ShouldBe("chartreuse");
}
```

The code itself is a little wonky, but I had that quickly working end to end. I next proceeded to the query string strategy like this:

```csharp
[Fact]
public async Task get_the_tenant_id_from_the_query_string()
{
    await configure(opts => opts.TenantId.IsQueryStringValue("t"));

    var result = await theHost.Scenario(x => x.Get.Url("/tenant?t=bar"));

    result.ReadAsText().ShouldBe("bar");
}
```

Hopefully you can see from the two tests above how that configure() method already helped me quickly write the next test. Sometimes (but not always, so be careful with this) the best thing you can do is to first invest in a test harness that makes subsequent tests more declarative, quicker to write mechanically, and easier to read later.

Next, let’s go to the request header strategy test:

```csharp
[Fact]
public async Task get_the_tenant_id_from_request_header()
{
    await configure(opts => opts.TenantId.IsRequestHeaderValue("tenant"));

    var result = await theHost.Scenario(x =>
    {
        x.Get.Url("/tenant");

        // Alba is helping set up the request header
        // for me here
        x.WithRequestHeader("tenant", "green");
    });

    result.ReadAsText().ShouldBe("green");
}
```

Easy enough, and hopefully you see how Alba helped me get the preconditions into the request quickly in that test. Now, let’s go for a little more complicated test where I first ran into a little trouble and work with the Claim strategy:

```csharp
[Fact]
public async Task get_the_tenant_id_from_a_claim()
{
    await configure(opts => opts.TenantId.IsClaimTypeNamed("tenant"));

    var result = await theHost.Scenario(x =>
    {
        x.Get.Url("/tenant");

        // Add a Claim to *only* this request
        x.WithClaim(new Claim("tenant", "blue"));
    });

    result.ReadAsText().ShouldBe("blue");
}
```

I hit a little friction at first because I didn’t have Alba set up exactly right, but since Alba runs your application code completely within process, it was very quick to step right into the code and figure out why it wasn’t working (I’d forgotten to set up the AuthenticationStub shown above). After refreshing my memory on how Alba’s Security Extensions work, I was able to get going again. Arguably, Alba’s ability to fake out or even work with your application’s security in tests is its best feature.

So that’s been a lot of “happy path” tests, so now let’s break things by specifying Wolverine’s new behavior to validate that a request has a valid tenant id with these two new tests. First, a happy path:

```csharp
[Fact]
public async Task require_tenant_id_happy_path()
{
    await configure(opts =>
    {
        opts.TenantId.IsQueryStringValue("tenant");
        opts.TenantId.AssertExists();
    });

    // Got a 200? All good!
    await theHost.Scenario(x =>
    {
        x.Get.Url("/tenant?tenant=green");
    });
}
```

Note that Alba would cause a test failure if the web request did not return a 200 status code.

And to lock down the binary behavior, here’s the “sad path” where Wolverine should be returning a 400 status code with ProblemDetails data:

```csharp
[Fact]
public async Task require_tenant_id_sad_path()
{
    await configure(opts =>
    {
        opts.TenantId.IsQueryStringValue("tenant");
        opts.TenantId.AssertExists();
    });

    var results = await theHost.Scenario(x =>
    {
        x.Get.Url("/tenant");

        // Tell Alba we expect a non-200 response
        x.StatusCodeShouldBe(400);
    });

    // Alba's helpers to deserialize JSON responses
    // to a strong typed object for easy
    // assertions
    var details = results.ReadAsJson<ProblemDetails>();

    // I like to refer to constants in test assertions sometimes
    // so that you can tweak error messages later w/o breaking
    // automated tests. And inevitably regret it when I
    // don't do this
    details.Detail.ShouldBe(TenantIdDetection
        .NoMandatoryTenantIdCouldBeDetectedForThisHttpRequest);
}
```

To be honest, it took me a few minutes to get the test above to pass because of some internal middleware mechanics I didn’t expect. As usual. All the same though, Alba helped me drive the code through “outside in” tests that ran quickly so I could iterate rapidly.
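The binary behavior those two tests lock down boils down to something like the sketch below: no tenant id means a 400 with ProblemDetails, anything else lets the request continue. The class name, method name, and message text are hypothetical stand-ins (the real message is the constant on `TenantIdDetection` used in the test above), and the real middleware wiring inside Wolverine is more involved than this:

```csharp
using Microsoft.AspNetCore.Http;
using Microsoft.AspNetCore.Mvc;

public static class TenantIdGuardSketch
{
    // Hypothetical message text, kept in a constant so that tests can
    // refer to it instead of duplicating the string
    public const string MissingTenantId =
        "No tenant id could be detected for this request";

    // Returns null when the request may proceed, or a ProblemDetails
    // describing a 400 response when no tenant id was detected
    public static ProblemDetails? Check(string? tenantId) =>
        string.IsNullOrEmpty(tenantId)
            ? new ProblemDetails
            {
                Status = StatusCodes.Status400BadRequest,
                Detail = MissingTenantId
            }
            : null;
}
```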

As always, I use Jeremy’s Only Law of Testing to decide on a mix of solitary or sociable tests in any particular scenario.

A bit about Alba

Alba itself is a descendant of some very old test helper code in FubuMVC, then was ported to OWIN (RIP, but I don’t miss you), then to early ASP.Net Core, and finally rebuilt as a helper around ASP.Net Core’s built in TestServer and WebApplicationFactory. Alba has been continuously used for well over a decade now. If you’re looking for selling points for Alba, I’d say:

- Alba makes your integration tests more declarative

- There are quite a few helpers for common repetitive tasks in integration tests like reading JSON data with the application’s built in serialization

- Simplifies test setup

- It runs completely in memory where you can quickly spin up your application and jump right into debugging when necessary

- Testing web services with Alba is much more efficient and faster than trying to do the same thing through inevitably slow, brittle, and laborious Selenium/Playwright/Cypress testing
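If you want to see those points outside of the Wolverine test harness, here’s a minimal sketch of an Alba test against a throwaway Minimal API endpoint. It assumes the Alba, xUnit, and Shouldly packages are referenced, and the `/ping` route and the `Pong` type are made up for this example:

```csharp
using System;
using System.Threading.Tasks;
using Alba;
using Microsoft.AspNetCore.Builder;
using Shouldly;
using Xunit;

// Hypothetical response type for the sketch
public record Pong(string Message);

public class minimal_alba_sketch
{
    [Fact]
    public async Task round_trip_json_completely_in_memory()
    {
        var builder = WebApplication.CreateBuilder(Array.Empty<string>());

        // Spin up the application in process, no network port required
        var host = await AlbaHost.For(builder, app =>
        {
            app.MapGet("/ping", () => new Pong("pong"));
        });

        try
        {
            // Declaratively describe the request and the expected response
            var result = await host.Scenario(x =>
            {
                x.Get.Url("/ping");
                x.StatusCodeShouldBeOk();
            });

            // Deserialize the response body to a strong typed object
            result.ReadAsJson<Pong>().Message.ShouldBe("pong");
        }
        finally
        {
            // Clean up so that later tests start from a clean slate
            await host.StopAsync();
            host.Dispose();
        }
    }
}
```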