Hey, did you know that JasperFx Software is ready for formal support plans for Marten and Wolverine? Not only are we trying to make the “Critter Stack” tools viable long-term options for your shop, we’re also interested in hearing your opinions about the tools and how they should change. We’re also certainly open to helping you succeed with your software development projects on a consulting basis, whether you’re using any part of the Critter Stack or any other .NET server side tooling.

Let’s build a small web service application using the whole “Critter Stack” and their friends, one small step at a time. For right now, the “finished” code is at CritterStackHelpDesk on GitHub.

The posts in this series are:

- Event Storming

- Marten as Event Store

- Marten Projections

- Integrating Marten into Our Application

- Wolverine as Mediator

- Web Service Query Endpoints with Marten

- Dealing with Concurrency

- Wolverine’s Aggregate Handler Workflow FTW!

- Command Line Diagnostics with Oakton (this post)

- Integration Testing Harness

- Marten as Document Database

- Asynchronous Processing with Wolverine

- Durable Outbox Messaging and Why You Care!

- Wolverine HTTP Endpoints

- Easy Unit Testing with Pure Functions

- Vertical Slice Architecture

- Messaging with Rabbit MQ

- The “Stateful Resource” Model

- Resiliency

Hey folks, I’m deviating a little bit from the planned order and taking a side trip while we’re finishing up a bug fix release to address some OpenAPI generation hiccups before I go on to Wolverine HTTP endpoints.

Admittedly, Wolverine and to a lesser extent Marten have a bit of a “magic” conventional approach. They also depend on external configuration items and on external infrastructural tools like databases or message brokers that require their own configuration, and there’s always the possibility of assembly mismatches from users doing who knows what with their NuGet dependency tree.

To help unwind potential problems and to facilitate environment setup, the “Critter Stack” uses the Oakton library to integrate command line diagnostic utilities right into your application.

Applying Oakton to Your Application

To get started, I’m going right back to the Program entry point of our incident tracking help desk application and adding just a couple lines of code. First, Oakton is a dependency of Wolverine, so there’s no additional dependency to add, but we’ll add a using statement:

using Oakton;

This is optional, but we’ll possibly want the extra diagnostics, so I’ll add this line of code near the top:

// This opts Oakton into trying to discover diagnostics

// extensions in other assemblies. Various Critter Stack

// libraries expose extra diagnostics, so we want this

builder.Host.ApplyOaktonExtensions();

and finally, I’m going to drop down to the last line of Program and replace the typical app.Run(); call with Oakton’s command line parsing:

// This is important for Wolverine/Marten diagnostics

// and environment management

return await app.RunOaktonCommands(args);

Do note that it’s important to return the exit code of the command line runner up above. If you choose to use Oakton commands in a build script, returning a non-zero exit code signals to the caller that the command failed.
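To make the build script scenario a little more concrete, here is a minimal shell sketch of a step that stops as soon as a command reports a non-zero exit code. The run_gate function is a hypothetical helper of my own and not part of Oakton, and the stand-in commands true and false are only there so the sketch runs anywhere. In a real script the gated command would be one of the Oakton commands, as shown in the comment.

```shell
#!/bin/sh
# Hypothetical helper, not part of Oakton: run a build step and
# propagate its exit code so the calling script can stop on failure.
run_gate() {
    description="$1"
    shift
    if "$@"; then
        echo "PASSED: $description"
    else
        status=$?
        echo "FAILED: $description (exit code $status)" >&2
        return "$status"
    fi
}

# In a real build script the gated step might look like:
#   run_gate "environment checks" dotnet run -- check-env || exit 1
# The stand-in commands below only demonstrate the behavior.
run_gate "step that succeeds" true
run_gate "step that fails" false || echo "build aborted"
```

This only works because the application returns the exit code from RunOaktonCommands. If Program swallowed that value, every step would look like a success to the script.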

Command Line Mechanics

Next, I’m going to open a command prompt to the root directory of the HelpDesk.Api project, and use this to get a preview of the command line options we now have:

dotnet run -- help

That should render some help text like this:

| Alias | Description |
| --- | --- |
| check-env | Execute all environment checks against the application |
| codegen | Utilities for working with JasperFx.CodeGeneration and JasperFx.RuntimeCompiler |
| db-apply | Applies all outstanding changes to the database(s) based on the current configuration |
| db-assert | Assert that the existing database(s) matches the current configuration |
| db-dump | Dumps the entire DDL for the configured Marten database |
| db-patch | Evaluates the current configuration against the database and writes a patch and drop file if there are any differences |
| describe | Writes out a description of your running application to either the console or a file |
| help | List all the available commands |
| marten-apply | Applies all outstanding changes to the database based on the current configuration |
| marten-assert | Assert that the existing database matches the current Marten configuration |
| marten-dump | Dumps the entire DDL for the configured Marten database |
| marten-patch | Evaluates the current configuration against the database and writes a patch and drop file if there are any differences |
| projections | Marten's asynchronous projection and projection rebuilds |
| resources | Check, setup, or teardown stateful resources of this system |
| run | Start and run this .Net application |
| storage | Administer the Wolverine message storage |

So that’s a lot, but let’s just start by explaining the basics of the command line for .NET applications. You can pass arguments and flags both to the dotnet application itself and to the application’s Program.Main(params string[] args) method. The key thing to know is that dotnet arguments and flags are segregated from the application’s arguments and flags by a double dash “--” separator. So for example, the command dotnet run --framework net8.0 -- codegen write is sending the framework flag to dotnet run, and the codegen write arguments to the application itself.
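If the separator rule is easier to see than to read, here is a purely illustrative shell sketch that labels each argument by which side of the first double dash it falls on. The split_args function is hypothetical, and this is not how the dotnet CLI is implemented. It only mirrors the rule described above.

```shell
#!/bin/sh
# Illustrative only: label each argument as belonging to "dotnet"
# (before the first --) or to the application (after it).
split_args() {
    target="dotnet"
    for arg in "$@"; do
        if [ "$target" = "dotnet" ] && [ "$arg" = "--" ]; then
            target="app"
            continue
        fi
        printf '%s: %s\n' "$target" "$arg"
    done
}

# Everything after "dotnet" in the example command from the text
split_args run --framework net8.0 -- codegen write
```

Running it labels run, --framework, and net8.0 as dotnet arguments, and codegen and write as application arguments.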

Stateful Resource Setup

Skipping a little bit to the end state of our help desk API project, we’ll have dependencies on:

- Marten schema objects in the PostgreSQL database

- Wolverine schema objects in the PostgreSQL database (for the transactional inbox/outbox we’ll introduce later in this series)

- Rabbit MQ exchanges for Wolverine to broadcast to later

One of the guiding philosophies of the Critter Stack is to minimize the “Time to Login Screen” (hat tip to Chad Myers) quality of your codebase. What this means is that we really want a new developer on our system (or a developer coming back after a long, well deserved vacation) to be able to do a clean clone of our codebase and very quickly run the application and any integration tests end to end. To that end, Oakton exposes its “Stateful Resource” model as an adapter for tools like Marten and Wolverine to set up their resources to match their configuration.

Pretend just for a minute that you have all the necessary rights and permissions to configure database schemas and Rabbit MQ exchanges, queues, and bindings on whatever your Rabbit MQ broker is for development. Assuming that, you can have your copy of the help desk API completely up and ready to run through these steps at the command prompt starting at wherever you want the code to be:

git clone https://github.com/JasperFx/CritterStackHelpDesk.git

cd CritterStackHelpDesk

docker compose up -d

cd HelpDesk.Api

dotnet run -- resources setup

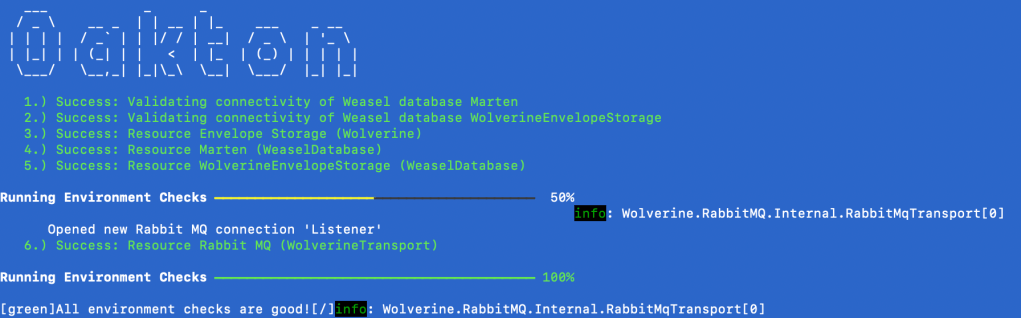

At the end of those calls, you should see this output:

The dotnet run -- resources setup command is able to do Marten database migrations for its event store storage and any document types it knows about upfront, the Wolverine envelope storage tables we’ll configure later, and the known Rabbit MQ exchange where we’ll configure for broadcasting integration events later.

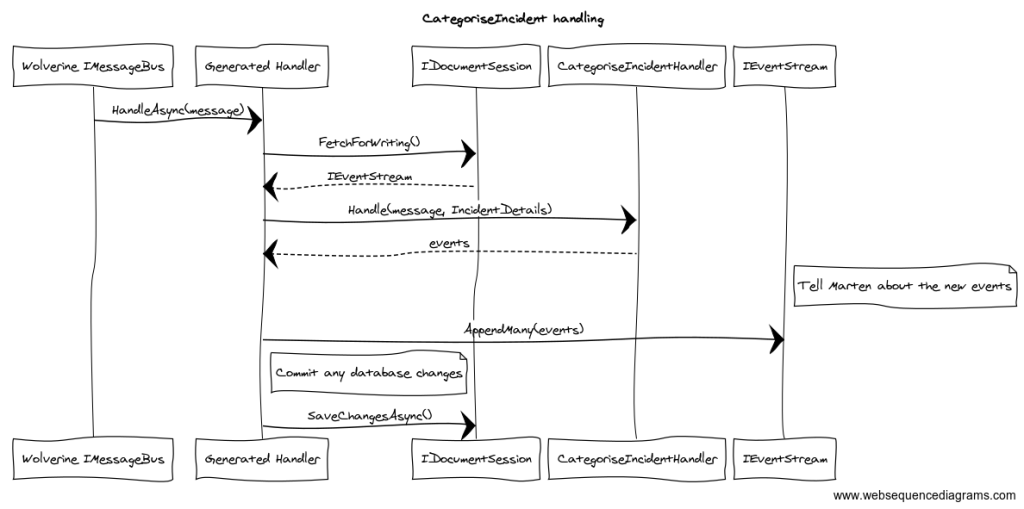

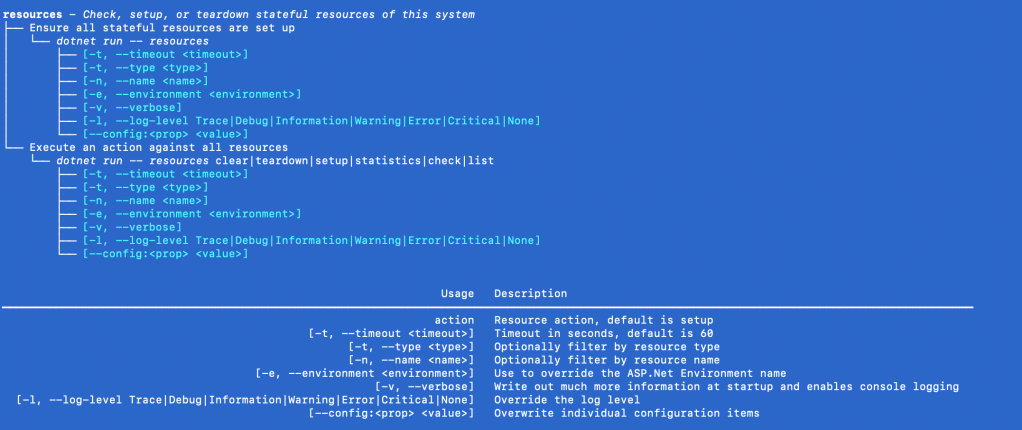

The resources command has other options as shown below from dotnet run -- help resources:

You may need to pause a little bit between the call to docker compose and dotnet run to let Docker catch up!
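If you would rather script that pause than wait by hand, one option is a small retry loop. This is only a sketch under a couple of assumptions: the retry function is a hypothetical helper of my own, and it assumes the setup command returns a non-zero exit code while the database is still unreachable. The stand-in command true keeps the sketch runnable without Docker or .NET installed.

```shell
#!/bin/sh
# Hypothetical helper: retry a command a fixed number of times,
# sleeping between attempts, and fail if every attempt fails.
retry() {
    attempts="$1"
    delay="$2"
    shift 2
    n=1
    while ! "$@"; do
        if [ "$n" -ge "$attempts" ]; then
            echo "giving up after $n attempts" >&2
            return 1
        fi
        n=$((n + 1))
        sleep "$delay"
    done
}

# Intended usage after "docker compose up -d" (not executed here):
#   retry 5 2 dotnet run -- resources setup
retry 3 0 true && echo "resources ready"
```

The first argument is the maximum number of attempts and the second is the delay in seconds between them, so the commented usage would try up to five times with a two second pause.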

Environment Checks

Years ago I worked on an early .NET system that still had a lot of COM dependencies that needed to be correctly registered outside of our application, and that used a shared database that was indifferently maintained, as was common way back then. Needless to say, our deployments were chaotic as we never knew what shape the server was in when we deployed. We finally beat our deployment woes by adding “environment tests” to our deployment scripts that would test out the environment dependencies (is the COM server there? can we connect to the database? is the expected XML file there?) and fail fast with descriptive messages when the server was in a crap state as we tried to deploy.

To that end, Oakton has its environment check model that both Marten and Wolverine utilize. In our help desk application, we already have a Marten dependency, so we know the application will not function correctly if the database is unavailable, or the connection string in the configuration just happens to be wrong, or there’s a security setup issue, or... you get the picture.

So, picking up our application with every bit of infrastructure purposely turned off, I’ll run this command:

dotnet run -- check-env

and the result is a huge blob of exception text and the command will fail — allowing you to abort a build script that might be delegating to this command:

Next, I’m going to turn on all the infrastructure (and set up everything to match our application’s configuration with the second command) with a quick call to:

docker compose up -d

dotnet run -- resources setup

Now, I can run the environment checks again and get a green bill of health for our system:

Oakton’s environment check model predates the new .NET IHealthCheck model. Oakton will also support that model soon, and you can track that work here.

“Describe” Our System

Oakton’s describe command can give you some insights into your application, and tools like Marten or Wolverine can expose extensions to this model for further output. By typing this command at the project root:

dotnet run -- describe

We’ll get some basic information about our system like this preview of the configuration:

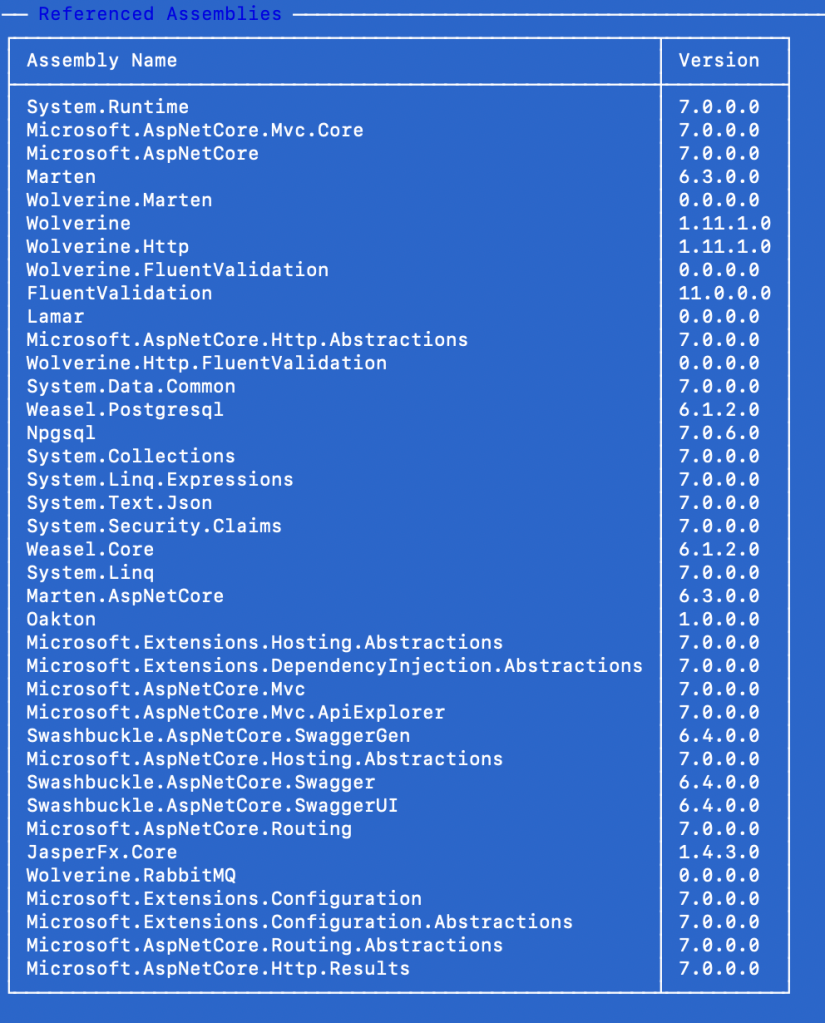

The loaded assemblies, because you will occasionally get burned by unexpected NuGet behavior pulling in the wrong versions:

And sigh, because folks have frequently had some trouble understanding how Wolverine does its automatic handler discovery, we have this preview:

And quite a bit more information including:

- Wolverine messaging endpoints

- Wolverine’s local queues

- Wolverine message routing

- Wolverine exception handling policy configuration

Summary and What’s Next

Oakton is yet another command line parsing tool in .NET, of which there are at least dozens that are perfectly competent. What makes Oakton special though is its ability to add command line tools directly to the entry point of your application where you already have all your infrastructure configuration available. The main point I hope you take away from this is that the command line tooling in the “Critter Stack” can help your team develop faster through the diagnostics and environment management features.

The “Critter Stack” is heavily utilizing Oakton’s extensibility model for:

- The static description of the application configuration that may frequently be helpful for troubleshooting or just understanding your system

- Stateful resource management of development dependencies like databases and message brokers. So far this is supported for Marten, both PostgreSQL and SQL Server dependencies of Wolverine, Rabbit MQ, Kafka, Azure Service Bus, and AWS SQS

- Environment checks to test out the validity of your system and its ability to connect to external resources during deployment or during development

- Any other utility you care to add to your system like resetting a baseline database state, adding users, or anything you care to do through Oakton’s command extensibility

As for what’s next, you’ll have to let me see when some bug fix releases get in place before I promise what exactly is going to be next in this series. I expect this series to at least go to 15-20 entries as I introduce more Wolverine scenarios, messaging, and quite a bit about automated testing. And also, I take requests!

If you’re curious, the JasperFx GitHub organization was originally conceived of as the reboot of the previous FubuMVC ecosystem, with the smaller ancillary tools ripped out of the flotsam and jetsam of StructureMap and FubuMVC arranged around the main project, which was then called “Jasper” and was named for my hometown. The smaller tools like Oakton, Alba, and Lamar are named after other small towns close to the titular Jasper, MO. As Marten took off and became by far and away the most important tool in our stable, we adopted the “Critter Stack” naming theme as we pulled out Weasel into its own library and completely rebooted and renamed “Jasper” as Wolverine to be a natural complement to Marten.

And lastly, I’m not sure that Oakton, MO will even show up on maps because it’s effectively a Methodist Church, a cemetery, the ruins of the general store, and a couple farm houses at a crossroads. In Missouri at least, towns cease to exist when they lose their post office. The area I grew up in is littered with former towns that fizzled out as the farm economy changed and folks moved to bigger towns later.